Learn how to validate your startup idea before you build it. This guide covers the 7 most effective market validation methods — from customer interviews and landing page tests to paid pilots — with real examples, step-by-step instructions, benchmarks, and the exact framework to know whether your idea is worth pursuing.

In 2008, Drew Houston was building a file-syncing service. Before the product was publicly available, he published a three-minute explainer video showing what it would do. Almost overnight, the beta waitlist grew from about 5,000 people to 75,000. That signal, tens of thousands of people raising their hands for a product they could not yet use, gave Houston the confidence (and the investor leverage) to build out Dropbox.

Compare that to Juicero. The company raised $120 million in venture capital to build a Wi-Fi-connected juicer that squeezed proprietary juice packs. The product was beautifully engineered. The packaging was premium. The branding was slick. And it failed spectacularly — because nobody actually needed a $400 internet-connected juicer when they could squeeze the packs by hand. The market didn't want it, and nobody bothered to check before spending nine figures.

These two stories illustrate the single most important lesson in entrepreneurship: validation before construction. The founders who check whether anyone wants what they're building before they build it save themselves months of wasted effort and thousands of dollars. The founders who skip this step join the 42% of startups that fail because there was no market need — the number one cause of startup failure according to CB Insights' analysis of over 100 post-mortems.

This guide is a practical, step-by-step framework for validating your startup idea in 2026. Not theory. Not motivational fluff. Concrete methods you can execute this week, with real benchmarks to know whether your results mean "go" or "stop."

What Is Market Validation?

Market validation is the process of testing whether real people have a genuine need for your product or service before you invest significant time and money building it. It's the bridge between "I think this is a good idea" and "I have evidence this is a good idea."

Effective validation answers four fundamental questions:

Does the problem exist? Is the pain point you want to solve real, frequent, and intense enough that people actively seek solutions for it? A problem that's merely annoying is different from a problem that costs people time, money, or opportunity every week.

Do people want a solution? Even if the problem exists, some problems are tolerable. People might acknowledge the pain but not care enough to change their behavior or spend money on a fix. Validation measures whether desire for a solution translates into action.

Will they pay for your specific solution? Wanting a solution and wanting your solution are different things. Maybe the problem is real but your approach to solving it doesn't resonate. Maybe the market already has good-enough alternatives. Validation tests whether your particular product concept is the one people choose.

Can you reach them affordably? A validated problem with a validated solution still fails if you can't cost-effectively find and acquire customers. Validation should include early signals about distribution — can you actually reach the people who need this?

Market Validation vs. Idea Validation vs. Product Validation

These terms are often used interchangeably, but they represent different stages of the validation process.

Idea validation is the earliest stage. You're testing whether the general concept has merit — does the problem exist, is the market big enough, is the timing right? This is primarily research-based: analyzing search trends, reading forum discussions, studying competitors, and conducting discovery interviews.

Market validation goes deeper. You're testing whether a specific target market will engage with your proposed solution. This involves putting something in front of potential customers — a landing page, a prototype, a waitlist — and measuring their response. Are they signing up? Are they sharing it? Are they offering to pay?

Product validation happens after you've built something. You're testing whether the actual product delivers on its promise. This is where metrics like activation rate, retention, and Net Promoter Score come in. It's the domain of product-market fit measurement (covered in our PMF guide).

This guide focuses primarily on idea validation and market validation — the work you do before building, or very early in the building process.

Why Market Validation Matters More in 2026

Validation has always been important, but several factors make it especially critical right now.

Building is cheaper and faster than ever. AI-assisted development, no-code platforms, and modern SaaS infrastructure mean you can go from idea to functional product in weeks rather than months. That sounds like a good thing, and it is — but it also means the barrier to entry is lower for everyone. More products are launching into every category, which means competition is fiercer and the cost of building the wrong thing is primarily opportunity cost: the months you spent building Product A when you should have been building Product B.

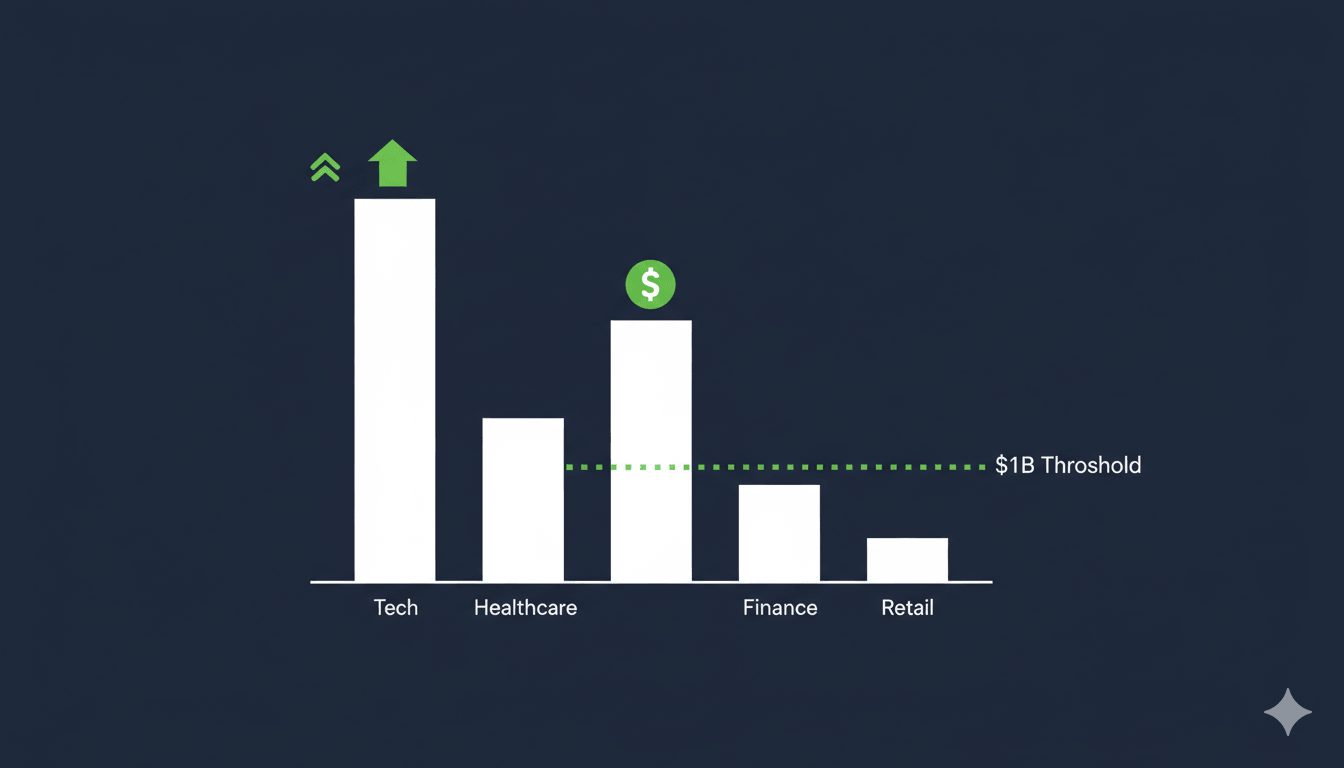

Capital is harder to raise without evidence. The era of funding ideas on a napkin is largely over. Investors at every stage, from angels to Series A, increasingly want to see evidence of market demand before committing capital. Waitlist signups, paid pilots, conversion data from landing pages, and letters of intent from potential customers are the currencies of credibility in 2026's fundraising environment.

Customer attention is the scarcest resource. People are bombarded with new products, tools, and services constantly. If your product doesn't solve a burning problem for a specific group of people, it will be ignored regardless of how well it's built. Validation ensures you're building something that earns attention rather than begging for it.

The cost of failure compounds. It's not just the money you spend building. It's the team morale that erodes when you realize nobody wants what you made. It's the months of runway you burned. It's the co-founder relationship that strains under the weight of a product nobody uses. Validation prevents all of this.

The Market Validation Framework: 7 Methods From Cheapest to Most Conclusive

Not all validation methods are equal. Some are quick and cheap but provide weak signals. Others require more effort but give you near-certainty about market demand. The best approach is to start with lightweight methods and escalate to heavier ones as your confidence grows.

Here's the framework, ordered from least expensive to most conclusive.

Method 1: Problem Discovery Interviews

Cost: Free (just your time). Time: 1-2 weeks. Signal strength: Moderate (validates the problem, not the solution).

This is where every validation process should start. Before testing whether people want your product, you need to confirm that the problem you're solving actually exists, that it's painful enough to motivate action, and that it's frequent enough to sustain a business.

How to do it:

Identify 15-20 people in your target audience. These should be people who you believe experience the problem you want to solve. Find them through your network, LinkedIn, relevant online communities (Reddit, Slack groups, Discord servers, industry forums), or even cold outreach.

Schedule 20-30 minute conversations. Don't pitch your idea. Instead, ask about their workflows, frustrations, and current solutions. The goal is to understand their world, not to sell them on yours.

Questions that actually work:

"Walk me through how you handle [the process your product addresses] today." This reveals their current workflow and where the pain points live.

"What's the most frustrating part of that process?" This identifies whether the specific pain point you're targeting is actually the one that bothers them most. Often, it isn't — and that's incredibly valuable to learn early.

"Have you tried any tools or solutions to fix this? What happened?" This reveals the competitive landscape from the customer's perspective. If they've tried three tools and abandoned all of them, that tells you something different than if they've never looked for a solution.

"If you could wave a magic wand and fix one thing about this, what would it be?" This open-ended question often surfaces needs you hadn't considered.

"How much time or money does this problem cost you per month?" This quantifies the pain. If the answer is "maybe 10 minutes a month," the problem might not be big enough to build a business around. If it's "we lose about $5,000 a month to this," you're onto something.

What constitutes a positive signal:

At least 10 out of 15 interviewees independently confirm the problem exists and is a meaningful frustration. Multiple people describe the same pain point using similar language (this becomes your marketing copy later). At least some interviewees have actively tried to solve the problem, indicating willingness to take action. People express emotional intensity when describing the problem — frustration, annoyance, resignation. Lukewarm responses are a negative signal.

What constitutes a negative signal:

People acknowledge the problem but rank it low on their list of priorities. Most interviewees have a "good enough" solution they're satisfied with. The problem exists but it's not frequent enough to drive regular usage of a product. Nobody has ever tried to solve it, which might mean it's not painful enough to motivate action.

The Mom Test rule: Named after Rob Fitzpatrick's book, the core principle is that you should never tell people about your idea during discovery interviews. The moment you pitch, the conversation shifts from honest exploration to polite feedback. People will tell you your idea is great because they don't want to hurt your feelings. Instead, ask about their life, their problems, and their behavior. The truth lies in what they do, not what they say they'd do.

Method 2: Demand Signal Analysis

Cost: Free to minimal. Time: A few hours to a day. Signal strength: Moderate (validates that people are searching for solutions).

Before talking to anyone, you can learn a lot by analyzing existing demand signals. This means looking at whether people are already actively searching for solutions to the problem you want to solve.

Tools and techniques:

Google Trends and keyword research. Search for terms related to your problem space. Are people googling "how to [solve the problem you address]"? Is search volume growing or declining? Use Google Keyword Planner, Ahrefs, or Ubersuggest to estimate monthly search volume for relevant keywords.

Reddit and forum mining. Search Reddit, Quora, Hacker News, and industry-specific forums for discussions about your problem. How many people are asking about it? How recent are the threads? What language do they use to describe the pain? This is some of the richest qualitative data you can find — real people describing real frustrations in their own words.

Competitor analysis. If competitors exist, that's actually a positive signal. It means the market is real and people are paying for solutions. Analyze their traffic (SimilarWeb), their reviews (G2, Capterra, App Store), and their pricing. Pay special attention to negative reviews — they reveal unmet needs that your product could address.

Social listening. Monitor Twitter/X, LinkedIn, and relevant Slack communities for people complaining about the problem. Tools like SparkToro can help you understand where your target audience spends time online and what topics they care about.

Community engagement metrics. If there are subreddits, Facebook groups, or Discord servers dedicated to the topic, how active are they? A subreddit with 50,000 members and daily posts is a much stronger signal than one with 2,000 members and a post per week.

Benchmarks: If you find more than 1,000 monthly searches for problem-related keywords, active community discussions with hundreds of engaged participants, and at least 2-3 existing competitors with paying customers, the demand signal is strong enough to move to the next validation stage.
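
The three benchmarks above can be written down as a quick checklist. This is only a sketch: the `DemandSignals` fields and the `demand_is_strong` function are hypothetical names, and reading "hundreds of engaged participants" as at least 200 is an assumption, not a number from this guide.

```python
from dataclasses import dataclass

# Assumption: "hundreds of engaged participants" is read as 200 or more.
MIN_ENGAGED_PARTICIPANTS = 200


@dataclass
class DemandSignals:
    monthly_searches: int       # combined volume for problem-related keywords
    engaged_participants: int   # people actively discussing the problem
    paying_competitors: int     # competitors known to have paying customers


def demand_is_strong(signals: DemandSignals) -> bool:
    """Return True only when all three benchmarks are met at once."""
    return (
        signals.monthly_searches > 1000
        and signals.engaged_participants >= MIN_ENGAGED_PARTICIPANTS
        and signals.paying_competitors >= 2
    )


if __name__ == "__main__":
    example = DemandSignals(
        monthly_searches=1500,
        engaged_participants=300,
        paying_competitors=2,
    )
    print(demand_is_strong(example))
```

Requiring all three conditions together is deliberately strict. If only one or two hold, treat that as a prompt to dig further rather than as a clear "no."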

Method 3: The Landing Page Test (Smoke Test)

Cost: $100-500 (page builder + ad spend). Time: 1-2 weeks. Signal strength: Strong (measures actual behavior, not just opinions).

This is the first method that tests real behavior rather than stated interest. The concept is simple: create a landing page that describes your product as if it already exists, drive traffic to it, and measure how many people take action (sign up for a waitlist, request early access, or attempt to purchase).

How to execute it:

Build the page. Use Carrd ($19/year), Webflow, or even a simple HTML page. Include a clear headline describing the core value proposition, a 2-3 sentence explanation of what the product does, 3-4 key features or benefits, social proof if you have any (even "from the makers of..." works), and a single call-to-action: "Join the waitlist," "Get early access," or "Sign up for launch notification."

Drive cold traffic. This is critical — the traffic must be cold (people who don't know you). Warm traffic from your personal network will always convert higher and give you a false positive. Run Google Ads targeting problem-related keywords ($5-15/day is enough), Facebook or Instagram ads targeting your demographic ($5-10/day), or post in relevant communities (if you can do so authentically without spamming).

Measure conversion rate. Track how many visitors land on the page and how many take the desired action.

Benchmarks for landing page tests:

| Conversion Rate | Interpretation |
|---|---|
| 5%+ | Strong demand signal: proceed with confidence |
| 2-5% | Moderate signal: worth exploring further, possibly iterate on messaging |
| 1-2% | Weak signal: the problem might not be urgent enough, or your positioning is off |
| Below 1% | Very weak: reconsider the idea or radically change the value proposition |
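
If you track results in a script or notebook, the benchmark table can be expressed in a few lines. This is a minimal sketch with hypothetical function names. The table's bands share their edges (2% and 5% each appear in two rows), so assigning an exact boundary value to the higher band is an assumption.

```python
def conversion_rate(signups: int, visitors: int) -> float:
    """Share of cold-traffic visitors who took the desired action."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return signups / visitors


def interpret(rate: float) -> str:
    """Map a conversion rate (0.045 means 4.5%) to the benchmark bands."""
    # Assumption: a rate exactly on a boundary counts toward the higher band.
    if rate >= 0.05:
        return "strong"
    if rate >= 0.02:
        return "moderate"
    if rate >= 0.01:
        return "weak"
    return "very weak"


if __name__ == "__main__":
    rate = conversion_rate(signups=18, visitors=400)
    print(f"{rate:.1%} -> {interpret(rate)}")  # prints "4.5% -> moderate"
```

Feed it cold-traffic numbers only. Conversions from your own network belong in a separate tally.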

Real example — Buffer: Joel Gascoigne validated Buffer (the social media scheduling tool) with a two-page website. The first page described the product concept and had a "Plans and Pricing" button. The second page showed pricing tiers and asked for an email address. He drove traffic from a few tweets and blog posts. When people clicked through to pricing and entered their email, Gascoigne knew the interest was real — people weren't just curious about the concept, they were interested enough to evaluate pricing. This took him from idea to validated demand in seven weeks.

Pro tips:

Test multiple value propositions by running 2-3 landing page variants with different headlines. The version that converts highest tells you which angle resonates most with your market.

Don't over-design the page. You're testing the idea, not your design skills. A clean, simple page with clear copy is all you need. Over-optimization (countdown timers, exit-intent popups, urgency triggers) actually hurts your signal quality — you want to know if the idea is compelling, not whether your conversion tactics work.

Use cold traffic only for your primary measurement. You can share with your network too, but track those conversions separately.
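
One caution when comparing headline variants on a small budget: with only a few hundred visitors per variant, the measured rates are noisy. The sketch below uses a Wilson score interval, a standard statistical technique that is not part of this guide's method, to show the plausible range behind each measured rate. The function names and the sample numbers are illustrative assumptions.

```python
import math


def wilson_interval(signups: int, visitors: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    if not 0 <= signups <= visitors:
        raise ValueError("signups must be between 0 and visitors")
    p = signups / visitors
    denom = 1 + z**2 / visitors
    center = (p + z**2 / (2 * visitors)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / visitors + z**2 / (4 * visitors**2)) / denom
    )
    return center - half_width, center + half_width


def intervals_overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True when two (low, high) ranges share at least one value."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    # Hypothetical results: variant A converts 12 of 200, variant B 7 of 200.
    variant_a = wilson_interval(12, 200)
    variant_b = wilson_interval(7, 200)
    print(f"A: {variant_a[0]:.1%} to {variant_a[1]:.1%}")
    print(f"B: {variant_b[0]:.1%} to {variant_b[1]:.1%}")
    print("Overlap:", intervals_overlap(variant_a, variant_b))
```

When the ranges overlap, as they do for these sample numbers, the "winning" headline may simply be luck. Keep the test running or send more traffic before committing to one angle.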

Method 4: The Concierge MVP

Cost: Free to minimal (your time). Time: 2-4 weeks. Signal strength: Very strong (tests willingness to use and pay).

A concierge MVP means delivering your product's core value manually to a small group of customers, without building any technology. You're the product. This method validates not just demand but also whether your solution actually works — whether customers experience the value you're promising.

How it works:

Find 5-10 people from your discovery interviews who expressed the strongest pain around the problem. Offer to solve their problem manually, exactly as your product would, but using spreadsheets, email, phone calls, or whatever manual process is required.

Real example — Food on the Table: The meal planning app started as a concierge service. Founder Manuel Rosso personally met with a single mother, learned about her family's dietary preferences, checked local grocery store sales, and created a custom meal plan with a shopping list. He did this manually for weeks. When the customer found it genuinely valuable and was willing to pay, he knew the concept worked. Only then did he start building the app.

Real example — Zappos: Nick Swinmurn didn't build an e-commerce platform. He photographed shoes at local stores, posted them online, and when someone ordered a pair, he went to the store, bought the shoes at retail price, and shipped them. He lost money on every transaction, but he proved that people would buy shoes online — which was the hypothesis everyone doubted.

What makes this method so powerful:

You learn exactly what customers value and what they don't, because you're watching them use the service in real time. You discover operational complexities that wouldn't surface in a survey or landing page test. You can iterate instantly — if something doesn't work, you adjust the service tomorrow. You build deep relationships with your first customers, who often become your most vocal advocates.

When to use it: When your product involves a complex workflow, when you need to understand nuances of the user experience before building, or when you want to validate willingness to pay (charge a discounted rate for the manual service).

Method 5: The Wizard of Oz MVP

Cost: Low to moderate. Time: 2-4 weeks. Signal strength: Very strong (users believe the product is real).

Similar to the concierge MVP, but with a key difference: the customer thinks they're using a real, automated product, while you're actually performing the work manually behind the scenes.

How it works:

Build a simple front-end — a basic website or app interface — that looks like a functioning product. When a user submits a request, you manually process it and deliver the result as if the system did it automatically.

When to use it: When you need to test whether users will engage with a product interface (not just a service), when the automation is the expensive part and you want to validate demand before investing in it, or when you need unbiased feedback (concierge customers know it's manual, which can bias their responses).

Example: A founder building an AI-powered resume reviewer could create a simple upload form. When candidates submit their resume, the founder personally reviews it and sends feedback styled as an automated report. If users find the feedback valuable and return for more, the concept is validated — then the AI automation is worth building.

Method 6: Pre-Sales and Crowdfunding

Cost: Moderate (campaign creation). Time: 4-8 weeks. Signal strength: Very strong (money is the ultimate validation).

Nothing validates demand like people paying you actual money for something that doesn't exist yet. Pre-sales and crowdfunding campaigns prove willingness to pay in the most direct way possible.

Options:

Direct pre-orders. Add a "Pre-order now" or "Buy now — ships in Q2" button to your landing page. If people enter their credit card information (even if you don't charge them yet), that's the strongest signal short of actual revenue.

Crowdfunding campaigns. Platforms like Kickstarter and Indiegogo let you sell a product before it exists. The barrier is higher — you need compelling marketing materials, a video, and a credible timeline — but a successful campaign simultaneously validates demand, generates capital, and builds an audience.

Paid pilots. For B2B products, offer a small number of companies a discounted "pilot program" where they pay a reduced rate in exchange for early access and influence over the product roadmap. Even $500/month from three pilot customers is powerful validation.

Benchmarks:

Any paying customer before you've built the product is a strong positive signal. For crowdfunding, reaching your funding goal within the first 48 hours typically predicts campaign success. For B2B paid pilots, getting 3-5 companies to sign letters of intent (LOIs) or commit to paid pilots validates the concept convincingly enough for most seed investors.

Real example — Oculus: The Oculus Rift launched a Kickstarter campaign with just a prototype headset and a demo video. No finished consumer product existed. The campaign raised $2.4 million from nearly 10,000 backers — so much demand that Facebook acquired the company for $2 billion before the consumer product even shipped. The Kickstarter campaign was the market validation.

Method 7: The Micro-Launch

Cost: Low to moderate. Time: 1-2 weeks. Signal strength: Strong (tests real-world engagement).

A micro-launch means releasing a bare-bones version of your product (or even just a detailed description) to a targeted community and measuring the response. This is different from a landing page test because you're engaging directly with a community that can ask questions, give feedback, and share your product.

Platforms for micro-launches:

Product Hunt. A strong Product Hunt launch can generate thousands of visitors, hundreds of signups, and valuable feedback within 24 hours. It's particularly effective for developer tools, SaaS products, and consumer apps.

Hacker News (Show HN). Great for technical products. The HN audience is critical but fair — if your product resonates, the feedback is invaluable. If it doesn't, you'll know quickly.

Reddit. Subreddits dedicated to your target audience (r/SaaS, r/startups, r/smallbusiness, industry-specific subreddits) can generate honest feedback and early adopters.

Indie Hackers. The community is highly supportive of new products and provides both feedback and signal about market interest.

What to measure: Number of signups or purchases in the first 48 hours, quality and sentiment of comments and feedback, whether the post gains organic traction (upvotes, shares) beyond your initial promotion, whether people ask "How do I sign up?" or "When can I buy this?" — unprompted purchase intent is the strongest signal.

The Validation Scorecard: Making a Go / Pivot / Stop Decision

After running through multiple validation methods, you need a structured way to synthesize your findings. Here's a scorecard framework you can use.

Score each dimension from 1 (weak) to 5 (strong):

Problem intensity (weight: 25%). Did interviewees describe the problem as a significant frustration? Did they use emotional language? Have they actively tried to solve it before?

Solution resonance (weight: 25%). When people saw your landing page, concierge service, or product description, did they engage? Was the conversion rate above 3%? Did they say "I need this" or "Where do I sign up?"

Willingness to pay (weight: 25%). Did anyone offer to pay during interviews? Did pre-orders or pilot commitments materialize? Did people click through to pricing? Was there actual money exchanged?

Market accessibility (weight: 25%). Can you reach your target customers through affordable channels? Are there communities, keywords, or distribution channels that give you access? Is the cost per click / cost per lead sustainable?

Interpreting your score:

| Weighted Score | Verdict | What to Do |
|---|---|---|
| 4.0–5.0 | GO | Build with confidence. You have strong evidence of demand. |
| 3.0–3.9 | ITERATE | Promising but not conclusive. Run one more validation cycle with adjusted positioning or a different segment. |
| 2.0–2.9 | PIVOT | The core problem might be real, but your approach needs significant change. Consider a different solution, a different audience, or a different business model. |
| Below 2.0 | STOP | The evidence suggests this isn't worth pursuing in its current form. Save your resources for a better opportunity. |
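
The scorecard arithmetic is simple enough to do by hand, but a short script keeps it honest. This is a sketch with hypothetical names (`WEIGHTS`, `weighted_score`, `verdict`). Treating each band's lower edge as inclusive, so that a score such as 3.95 counts as ITERATE, is an assumption, since the table leaves small gaps between bands.

```python
# Equal weights, as given in the scorecard above.
WEIGHTS = {
    "problem_intensity": 0.25,
    "solution_resonance": 0.25,
    "willingness_to_pay": 0.25,
    "market_accessibility": 0.25,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine the four 1-to-5 dimension scores into one weighted score."""
    if set(scores) != set(WEIGHTS):
        raise ValueError(f"expected exactly these dimensions: {sorted(WEIGHTS)}")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def verdict(score: float) -> str:
    """Map a weighted score to the verdict bands in the table."""
    # Assumption: each band includes its lower edge.
    if score >= 4.0:
        return "GO"
    if score >= 3.0:
        return "ITERATE"
    if score >= 2.0:
        return "PIVOT"
    return "STOP"


if __name__ == "__main__":
    example = {
        "problem_intensity": 4,
        "solution_resonance": 3,
        "willingness_to_pay": 3,
        "market_accessibility": 4,
    }
    total = weighted_score(example)
    print(total, verdict(total))  # prints "3.5 ITERATE"
```

Because the weights live in one dictionary, you can experiment with uneven weights (for example, more emphasis on willingness to pay), as long as they still sum to 1.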

Market Validation Mistakes That Cost Founders Months

Asking Friends and Family

Your friends will tell you your idea is great because they like you. Your mom will tell you it's brilliant because she's your mom. This isn't validation — it's emotional support. Only feedback from people who have no social incentive to be nice counts. Use cold traffic, strangers in your target market, and people who have no idea who you are.

Confusing Interest With Intent

"Oh, that's a cool idea" is not validation. "Where do I sign up?" is validation. "I'd probably use that" is not validation. "Can I pay you to do this for me right now?" is validation. The gap between stated interest and actual behavior is enormous. Always design your validation tests to measure behavior (clicks, signups, payments) rather than opinions.

Validating the Problem but Not the Solution

Many founders do excellent discovery research, confirm the problem is real, and then assume their specific solution is the answer. These are two separate hypotheses. The problem might be real, but your approach to solving it might not resonate. Always test the solution concept separately from the problem.

Over-Building Before Validating

The instinct to build is strong, especially for technical founders. "Let me just build a quick prototype" turns into three months of development before anyone has tested whether the market wants it. Force yourself to validate before building. Every validation method in this guide can be executed without writing a single line of code.

Validating Once and Stopping

Markets evolve. Customer needs shift. Competitors launch. Validation isn't a one-time checkbox — it's an ongoing discipline. Even after launch, you should continuously validate new features, new segments, and new directions before committing significant resources.

Ignoring Negative Signals

Confirmation bias is the founder's worst enemy. When 12 out of 15 interviewees tell you the problem isn't that important to them, the right response is "this might not be the right idea" — not "those 3 people who agreed are my real target market." Be honest with yourself about what the data says.

A 30-Day Market Validation Sprint

If you want a concrete timeline, here's a proven sprint you can execute in one month.

Week 1: Research and Discovery. Conduct demand signal analysis (keyword research, Reddit mining, competitor analysis). Reach out to schedule 15-20 discovery interviews. Begin interviewing by end of week.

Week 2: Complete Interviews and Analyze. Finish all discovery interviews. Synthesize findings: What's the real problem? Who feels it most? What language do they use? Make a go/no-go decision on whether to proceed to solution testing.

Week 3: Landing Page Test. Build a landing page describing your solution. Launch Google and/or social ads targeting cold traffic. Monitor signups and conversion rate daily. Begin testing with 2-3 different headlines if budget allows.

Week 4: Deepen Validation. If landing page conversion exceeded 3%, reach out to signups for deeper conversations. Offer a concierge version of the service to 5-10 interested people. Test willingness to pay (even at a discount). Score your results on the validation scorecard and make your Go / Pivot / Stop decision.

Total cost: $200-$500 in ad spend, plus your time. That's all it takes to avoid building something nobody wants.

How WorthBuild Accelerates Your Validation

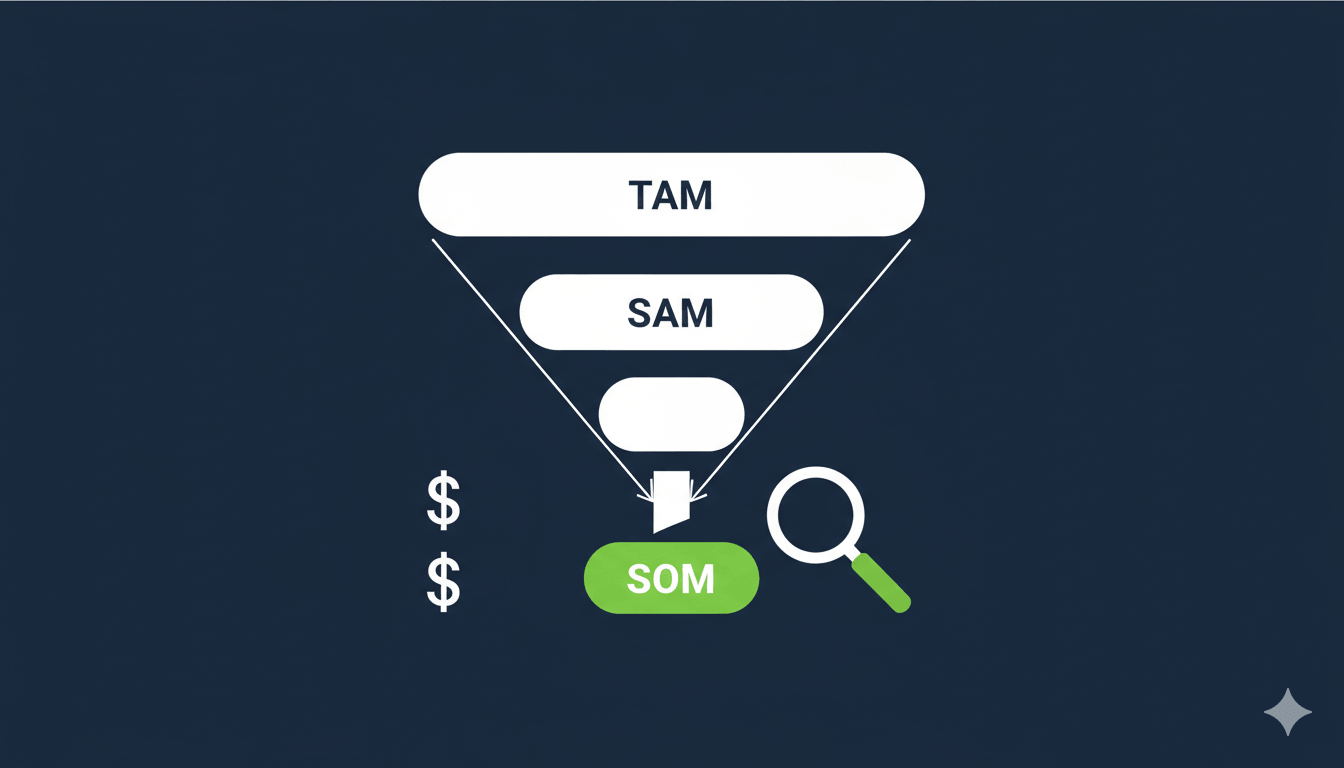

The validation sprint above takes about 30 days. WorthBuild compresses the research phase — demand signal analysis, competitor mapping, market sizing, and audience identification — into about two minutes.

When you describe your startup idea, WorthBuild generates a comprehensive validation report using real market data from search trends, community discussions, startup launch platforms, developer ecosystems, and funding databases. The report includes a Go / Pivot / Stop verdict with confidence scoring, competitor analysis with strengths and gaps, TAM / SAM / SOM market sizing, unit economics and financial projections, risk assessment across market, technology, and execution dimensions, and a "Your First Customers" list — real people in online communities who are actively describing the problem your idea solves, with personalized outreach messages.

The research phase that would normally take your entire first week is handled automatically, so you can jump straight to interviews and landing page tests with a clear understanding of the market landscape.

You can validate one idea per month for free — no credit card required. Try it at worthbuild.io.

Tools for Market Validation in 2026

You don't need expensive tools to validate your idea, but the right ones can save you significant time. Here's what works at each stage.

For Discovery Research

SparkToro helps you understand where your target audience hangs out online, what they read, who they follow, and what language they use. This is invaluable for knowing where to find interview subjects and where to run ads later.

Google Keyword Planner and Ubersuggest give you search volume data for problem-related keywords. If 10,000 people per month search for "how to [solve the problem you address]," that's a quantifiable demand signal.

Reddit Search and GummySearch let you mine Reddit for discussions about your problem space. GummySearch specifically is built for startup founders — it surfaces pain points, solution requests, and spending signals from subreddit conversations.

For Landing Page Tests

Carrd ($19/year) is the fastest way to build a clean, single-page landing page. It takes less than an hour to get something live.

Google Ads is the best source for cold traffic testing because people are actively searching for solutions. A $10/day budget for 7 days ($70 total) will give you enough data to measure conversion rates for most niches.

Plausible or Fathom for privacy-friendly analytics that give you accurate visitor counts without the complexity of Google Analytics.

For Concierge and Manual MVPs

Notion or Airtable for managing your manual service delivery — tracking customers, requests, and outcomes.

Calendly for scheduling calls and check-ins with your concierge customers.

Stripe for accepting payments, even for manual services. Charging early, even a small amount, validates willingness to pay.

For Comprehensive Validation

WorthBuild generates a full validation report in 2 minutes — competitor analysis, market sizing, demand signals, risk assessment, and potential first customers — replacing days of manual research. Especially useful as a starting point before you invest in interviews and landing page tests.

Frequently Asked Questions

How much money do I need to validate a startup idea?

You can validate most ideas for under $500. Discovery interviews are free. A landing page costs $19-50 to build. A week of Google Ads testing costs $70-150. The biggest investment is your time — expect to spend 30-50 hours over a month on a thorough validation sprint. That's a tiny fraction of what you'd spend building a product nobody wants.

How many customer interviews do I need?

Plan for 15-20 interviews. Research consistently shows that patterns emerge after 10-12 conversations, and by 15-20 you'll have strong confidence in your findings. If you're hearing the same things repeated after 10 interviews, you've likely reached saturation. If every interview surfaces new information, keep going.

What if I validate the problem but my solution doesn't resonate?

That's actually a great outcome — it means you've confirmed a real market need, you just need a different approach to solving it. Go back to your interview notes and look at how people currently solve the problem, what they wish existed, and what specific words they use to describe their ideal solution. Often, the right solution is hiding in your research — you just need to reframe your approach.

Should I validate if there are already competitors in my market?

Absolutely. Competitors are a positive signal — they prove the market exists and people pay for solutions. Your validation should focus on whether your specific differentiation matters to customers. Are you faster, cheaper, simpler, better designed, more specialized? Test whether your unique angle resonates, not whether the general problem is real (your competitors already proved that).

What if my idea is so new that nobody is searching for it?

This is common with innovative products. If nobody is searching for your solution, search for the problem instead. People weren't searching for "ride-sharing apps" before Uber existed, but they were searching for "cheap taxi alternatives" and complaining about cab service on forums. Focus your validation on the underlying pain, not the product category.

Can I skip validation if I'm building for myself?

Building a product you personally need (dogfooding) gives you one validated data point — yourself. That's a start, but it's not enough. You need to confirm that enough other people share your problem, experience it with the same intensity, and would pay for a solution. Many founders build products they personally love that nobody else cares about. Always validate beyond your own experience.

Key Takeaways

Market validation is the process of testing whether real people have a genuine need for your product before you invest significant resources building it. Skipping it is the number one reason startups fail.

Start with cheap, fast methods (discovery interviews, demand signal analysis) and escalate to higher-conviction methods (landing page tests, concierge MVPs, pre-sales) as your confidence grows.

Always measure behavior, not opinions. Clicks, signups, and payments are real signals. "That's a cool idea" is not.

Use the validation scorecard to make a structured Go / Pivot / Stop decision based on four dimensions: problem intensity, solution resonance, willingness to pay, and market accessibility.

A complete validation sprint can be done in 30 days for under $500. There's no excuse for building blind.

Validation isn't a one-time event. Markets change, competitors launch, and customer needs evolve. Build validation into your ongoing practice, not just your pre-launch process.

The cheapest time to discover your idea won't work is before you've written any code. Validate first. Build second.

Ready to validate your startup idea? Try WorthBuild free — get a data-backed market validation report with competitor analysis, market sizing, and your first potential customers in 2 minutes.